In a previous article we covered all the details necessary to start using unit testing on a real-world project. That was enough knowledge to get started and tackle just about any codebase. Eventually you might have found yourself doing a lot of typing, writing redundant tests, or getting frustrated trying to write unit tests around certain libraries. Mock objects are the final piece of your toolkit, the one that lets you test like a pro in just about any codebase.

Testing Code

The purpose of writing unit tests is to verify the code does what it’s supposed to do. How exactly do we go about checking that? It depends on what the code under test does. There are three main things we can test for when writing unit tests:

- Return values. This is the easiest thing to test. We call a function and verify that the return value is what we expect. It can be a simple boolean, or maybe it’s a number resulting from a complex calculation. Either way, it’s simple and easy to test. It doesn’t get any better than this.

- Modified data. Some functions will modify data as a result of being called (for example, filling out a vertex buffer with particle data). Testing this data can be straightforward as long as the outputs are clearly defined. If the function changes data in some global location, then it can be more complicated to test it or even find all the possible places that can be changed. Whenever possible, pass the address of the data to be modified as an input parameter to the functions. That will make them easier to understand and test.

- Object interaction. This is the hardest effect to test. Sometimes calling a function doesn’t return anything or modify any external data directly, and it instead interacts with other objects. We want to test that the interaction happened in the order we expected and with the parameters we expected.

Testing the first two cases is relatively simple, and there’s nothing you need to do beyond what a basic unit-testing framework provides. Call the function and verify values with a CHECK statement. Done. However, testing that an object “talks” with other objects in the correct way is much trickier. That’s what we’ll concentrate on for the rest of the article.
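As a minimal sketch of those first two cases, here is one return-value test and one modified-data test. The functions ClampHealth() and FillVertexBuffer() are hypothetical examples invented for illustration, and plain assert() stands in for the CHECK macros of a real unit-testing framework.

```cpp
#include <cassert>
#include <vector>

// Hypothetical code under test: returns a value.
int ClampHealth(int health, int maxHealth)
{
    if (health < 0) return 0;
    if (health > maxHealth) return maxHealth;
    return health;
}

struct Particle { float x, y, z; };

// Hypothetical code under test: modifies data supplied by the caller.
// Writes three floats (x, y, z) per particle into outVertices.
void FillVertexBuffer(const std::vector<Particle>& particles,
                      std::vector<float>& outVertices)
{
    outVertices.clear();
    for (const Particle& p : particles)
    {
        outVertices.push_back(p.x);
        outVertices.push_back(p.y);
        outVertices.push_back(p.z);
    }
}

// Return-value test: call the function and check what comes back.
void TestClampHealthReturnsMaxWhenAboveRange()
{
    assert(ClampHealth(150, 100) == 100);
}

// Modified-data test: the output is passed in explicitly, so it is
// obvious what to inspect after the call.
void TestFillVertexBufferWritesThreeFloatsPerParticle()
{
    std::vector<Particle> particles;
    particles.push_back(Particle{1.0f, 2.0f, 3.0f});
    particles.push_back(Particle{4.0f, 5.0f, 6.0f});

    std::vector<float> vertices;
    FillVertexBuffer(particles, vertices);

    assert(vertices.size() == 6);
    assert(vertices[0] == 1.0f);
    assert(vertices[5] == 6.0f);
}
```

Neither test needs anything beyond the function itself and a way to compare values, which is why these two cases are the easy ones.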

As a side note, when we talk about object interaction, it simply refers to parts of the code calling functions or sending messages to other parts of the code. It doesn’t necessarily imply real objects. Everything we cover here applies as well to plain functions calling other functions.
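To illustrate that point, here is a rough sketch of testing an interaction between plain functions, with no classes involved. NotifyDeaths() and its callback parameter are hypothetical names; the stand-in for the real sound function is just a lambda that records what it was called with.

```cpp
#include <cassert>
#include <functional>
#include <vector>

// Hypothetical code under test: plays a sound for every entity that
// died this frame. Its dependency is a plain callable, not an object.
void NotifyDeaths(const std::vector<int>& deadEntityUids,
                  const std::function<void(int)>& playDeathSound)
{
    for (int uid : deadEntityUids)
        playDeathSound(uid);
}

// Test helper: runs NotifyDeaths() with a recording stand-in and returns
// the uids it received, in the order the calls happened.
std::vector<int> RecordDeathSoundCalls(const std::vector<int>& deadEntityUids)
{
    std::vector<int> received;
    NotifyDeaths(deadEntityUids,
                 [&received](int uid) { received.push_back(uid); });
    return received;
}
```

A test can then compare the recorded vector against the expected sequence, which checks the number of calls, their parameters, and their order in one go.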

Before we go any further, let’s look at a simple example of object interaction. We have a game entity factory and we want to test that the function CreateGameEntity() finds the entity template in the dictionary and calls CreateMesh() once for each mesh.

    TEST(CreateGameEntityCallsCreateMeshForEachMesh)
    {
        EntityDictionary dict;
        MeshFactory meshFactory;
        GameEntityFactory gameFactory(dict, meshFactory);

        Entity* entity = gameFactory.CreateGameEntity(gameEntityUid);

        // How do we test it called the correct functions?
    }

We can write a test like the one above, but after we call the function CreateGameEntity(), how do we test the right functions were called in response? We can try testing for their results. For example, we could check that the returned entity has the correct number of meshes, but that relies on the mesh factory working correctly, which we’ve probably tested elsewhere, so we’re testing things multiple times. It also means that it needs to physically create some meshes, which can be time consuming or just need more resources than we want for a unit test. Remember that these are unit tests, so we really want to minimize the amount of code that is under test at any one time. Here we only want to test that the entity factory does the right thing, not that the dictionary or the mesh factory work.

Introducing Mocks

To test interactions between objects, we need something that sits between those objects and intercepts all the function calls we care about. At the same time, we want to make sure that the code under test doesn’t need to be changed just to be able to write tests, so this new object needs to look just like the objects the code expects to communicate with.

A mock object is an object that presents the same interface as some other object in the system, but whose only goal is to attach to the code under test and record function calls. This mock object can then be inspected by the test code to verify all the communication happened correctly.

    TEST(CreateGameEntityCallsCreateMeshForEachMesh)
    {
        MockEntityDictionary dict;
        MockMeshFactory meshFactory;
        GameEntityFactory gameFactory(dict, meshFactory);
        dict.meshCount = 3;

        Entity* entity = gameFactory.CreateGameEntity(gameEntityUid);

        CHECK_EQUAL(1, dict.getEntityInfoCallCount);
        CHECK_EQUAL(gameEntityUid, dict.lastEntityUidPassed);
        CHECK_EQUAL(3, meshFactory.createMeshCallCount);
    }

This code shows how a mock object helps us test our game entity factory. Notice how there are no real MeshFactory or EntityDictionary objects. Those have been removed from the test completely and replaced with mock versions. Because those mock objects implement the same interface as the objects they’re standing in for, the GameEntityFactory doesn’t know that it’s being tested and goes about business as usual.

Here are the mock objects themselves:

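    struct MockEntityDictionary : public IEntityDictionary
    {
        MockEntityDictionary()
            : meshCount(0)
            , lastEntityUidPassed(0)
            , getEntityInfoCallCount(0)
        {}

        // Records the call and hands back the canned mesh count.
        void GetEntityInfo(EntityInfo& info, int uid)
        {
            lastEntityUidPassed = uid;
            info.meshCount = meshCount;
            ++getEntityInfoCallCount;
        }

        int meshCount;               // value fed in by the test
        int lastEntityUidPassed;     // last uid the code under test asked for
        int getEntityInfoCallCount;  // number of calls received
    };

    struct MockMeshFactory : public IMeshFactory
    {
        MockMeshFactory() : createMeshCallCount(0)
        {}

        // Counts the call; no real mesh is created.
        Mesh* CreateMesh()
        {
            ++createMeshCallCount;
            return NULL;
        }

        int createMeshCallCount;     // number of calls received
    };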

Notice that they do no real work; they’re just there for bookkeeping purposes. They count how many times functions are called, record some of the parameters, and return whatever values you fed them ahead of time. Setting meshCount in the dictionary to 3 is what lets us then test that the mesh factory is called the correct number of times.
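For reference, here is one way the interfaces and the factory under test might look. This is only a sketch under assumptions: the article does not show IEntityDictionary, IMeshFactory, EntityInfo, Entity, or the body of GameEntityFactory, so everything below except the names and the two function signatures used by the mocks is guessed. The mocks are repeated in condensed form so the listing compiles on its own.

```cpp
#include <cassert>
#include <vector>

struct Mesh {};
struct EntityInfo { int meshCount = 0; };
struct Entity { std::vector<Mesh*> meshes; };

// Assumed interfaces; real ones would likely have more functions.
struct IEntityDictionary
{
    virtual ~IEntityDictionary() {}
    virtual void GetEntityInfo(EntityInfo& info, int uid) = 0;
};

struct IMeshFactory
{
    virtual ~IMeshFactory() {}
    virtual Mesh* CreateMesh() = 0;
};

// Assumed shape of the code under test: it only sees the interfaces,
// so it cannot tell a mock from the real thing.
class GameEntityFactory
{
public:
    GameEntityFactory(IEntityDictionary& dict, IMeshFactory& meshFactory)
        : m_dict(dict), m_meshFactory(meshFactory) {}

    // The caller owns the returned entity.
    Entity* CreateGameEntity(int uid)
    {
        EntityInfo info;
        m_dict.GetEntityInfo(info, uid);

        Entity* entity = new Entity;
        for (int i = 0; i < info.meshCount; ++i)
            entity->meshes.push_back(m_meshFactory.CreateMesh());
        return entity;
    }

private:
    IEntityDictionary& m_dict;
    IMeshFactory& m_meshFactory;
};

// Condensed versions of the hand-written mocks.
struct MockEntityDictionary : public IEntityDictionary
{
    int meshCount = 0;
    int lastEntityUidPassed = 0;
    int getEntityInfoCallCount = 0;

    void GetEntityInfo(EntityInfo& info, int uid) override
    {
        lastEntityUidPassed = uid;
        info.meshCount = meshCount;
        ++getEntityInfoCallCount;
    }
};

struct MockMeshFactory : public IMeshFactory
{
    int createMeshCallCount = 0;
    Mesh* CreateMesh() override { ++createMeshCallCount; return nullptr; }
};

// Runs the scenario from the test and reports how many meshes were requested.
int CountCreateMeshCalls(int meshCount)
{
    MockEntityDictionary dict;
    MockMeshFactory meshFactory;
    GameEntityFactory gameFactory(dict, meshFactory);
    dict.meshCount = meshCount;

    Entity* entity = gameFactory.CreateGameEntity(42); // arbitrary uid
    delete entity;
    return meshFactory.createMeshCallCount;
}
```

The factory takes references to the interface types, which is exactly the hook that lets the test pass in mocks instead of the real dictionary and mesh factory.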

When developers talk about mock objects, they’ll often differentiate between mocks and fakes. Mocks are objects that stand in for a real object, and they are used to verify the interaction between objects. Fakes also stand in for real objects, but they’re there to remove dependencies or speed up tests. For example, you could have a fake object that stands in for the file system and provides data directly from memory, allowing tests to run very quickly and not depend on a particular file layout. All the techniques presented in this article apply to both mocks and fakes; it’s just how you use them that sets them apart from each other.
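A fake file system along those lines could be sketched as follows. The IFileSystem interface, its ReadTextFile() function, and the CountLines() function under test are all hypothetical, made up to show the idea of serving file contents straight from memory.

```cpp
#include <cassert>
#include <map>
#include <string>

// Hypothetical interface the game code uses to read files.
struct IFileSystem
{
    virtual ~IFileSystem() {}
    // Returns true and fills 'contents' if the file exists.
    virtual bool ReadTextFile(const std::string& path, std::string& contents) = 0;
};

// Fake: same interface, but the "files" live in a map in memory.
// Unlike a mock, it records nothing; it just removes the disk dependency.
struct FakeFileSystem : public IFileSystem
{
    std::map<std::string, std::string> files;

    bool ReadTextFile(const std::string& path, std::string& contents) override
    {
        std::map<std::string, std::string>::const_iterator it = files.find(path);
        if (it == files.end())
            return false;
        contents = it->second;
        return true;
    }
};

// Hypothetical code under test: counts newline characters in a text file.
// Returns -1 if the file cannot be read.
int CountLines(IFileSystem& fs, const std::string& path)
{
    std::string text;
    if (!fs.ReadTextFile(path, text))
        return -1;

    int lines = 0;
    for (char c : text)
        if (c == '\n')
            ++lines;
    return lines;
}

// Test helper: puts one file in the fake and counts the lines of 'pathToRead'.
int CountLinesWithFake(const std::string& storedPath,
                       const std::string& storedContents,
                       const std::string& pathToRead)
{
    FakeFileSystem fs;
    fs.files[storedPath] = storedContents;
    return CountLines(fs, pathToRead);
}
```

The test checks the return value of CountLines(), not how the file system was called, which is the difference in usage between a fake and a mock.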

Mocking Frameworks

The basics of mocking objects are as simple as what we’ve seen. Armed with that knowledge, you can go ahead and test all the object interactions in your code. However, I bet that you’re going to get tired quickly from all that typing every time you create a new mock. The bigger and more complex the object is, the more tedious the operation becomes. That’s where a mocking framework comes in.


A mocking framework lets you create mock objects in a more automated way, with less typing. Different frameworks use different syntax, but at the core they all have two parts to them:

- A semi-automatic way of creating a mock object from an existing class or interface.

- A way to set up the mock expectations. Expectations are the results you expect to happen as a result of the test: functions called in that object, the order of those calls, or the parameters passed to them.

Once the mock object has been created and its expectations set, you perform the rest of the unit test as usual. If the mock object didn’t receive the correct calls the way you specified in the expectations, the unit test is marked as failed. Otherwise the test passes and everything is good.
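To make the idea of expectations concrete without tying it to any particular framework, here is a hand-rolled sketch of a mock that carries a single expectation (a call count) and can verify it. ExpectCreateMeshCalls() and Verify() are hypothetical names, not the API of any real framework; a framework would generate this kind of bookkeeping for you and run the verification automatically when the test ends.

```cpp
#include <cassert>

struct Mesh {};

struct IMeshFactory
{
    virtual ~IMeshFactory() {}
    virtual Mesh* CreateMesh() = 0;
};

// Mock with one built-in expectation: how often CreateMesh() is called.
struct ExpectingMeshFactory : public IMeshFactory
{
    ExpectingMeshFactory() : expectedCalls(0), actualCalls(0) {}

    // Step 1: the test states what it expects before running the code.
    void ExpectCreateMeshCalls(int count) { expectedCalls = count; }

    // Step 2: the code under test calls into the mock.
    Mesh* CreateMesh() override
    {
        ++actualCalls;
        return nullptr;
    }

    // Step 3: the expectation is compared against what really happened.
    bool Verify() const { return actualCalls == expectedCalls; }

    int expectedCalls;
    int actualCalls;
};

// Stand-in for the code under test.
void CreateMeshes(IMeshFactory& factory, int meshCount)
{
    for (int i = 0; i < meshCount; ++i)
        factory.CreateMesh();
}

// Returns true when the mock saw exactly the number of calls it was told to expect.
bool ExpectationHolds(int expectedCalls, int meshCount)
{
    ExpectingMeshFactory factory;
    factory.ExpectCreateMeshCalls(expectedCalls);
    CreateMeshes(factory, meshCount);
    return factory.Verify();
}
```

Compared with the earlier hand-written mocks, the only change is that the expected value moves into the mock itself instead of living in a CHECK statement at the end of the test.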

GoogleMock

GoogleMock is the free C++ mocking framework provided by Google. It takes a very straightforward implementation approach and offers a set of macros to easily create mocks for your classes and set up expectations. Because you need to create mocks by hand, there’s still a fair amount of typing involved to create each mock, although Google provides a Python script that can generate mocks automatically from C++ classes. It still relies on your classes inheriting from a virtual interface to hook up the mock object to your code.

This code shows the game entity factory test written with GoogleMock. Keep in mind that in addition to the test code, you still need to create the mock object through the macros provided in the framework.

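    TEST(CreateGameEntityCallsCreateMeshForEachMesh)
    {
        MockEntityDictionary dict;
        MockMeshFactory meshFactory;
        GameEntityFactory gameFactory(dict, meshFactory);

        // Canned data the mock dictionary hands back through its
        // EntityInfo& output parameter.
        EntityInfo info;
        info.meshCount = 3;

        // Expectations must be set before the code under test runs.
        // _ and SetArgReferee come from the testing namespace.
        EXPECT_CALL(dict, GetEntityInfo(_, gameEntityUid))
            .Times(1)
            .WillOnce(SetArgReferee<0>(info));
        EXPECT_CALL(meshFactory, CreateMesh())
            .Times(3);

        Entity* entity = gameFactory.CreateGameEntity(gameEntityUid);
    }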

MockItNow

This open-source C++ mocking framework written by Rory Driscoll takes a totally different approach from GoogleMock. Instead of requiring that all your mockable classes inherit from a virtual interface, it uses compiler support to insert some code before each call. This code can then call the mock and return to the test directly, without ever calling the real object.

From a technical point of view, it’s a very slick method of hooking up the mocks, but the main advantage of this approach is that it doesn’t force a virtual interface on classes that don’t need it. It also minimizes typing compared to GoogleMock. The only downside is that it’s a very platform-specific implementation, and the version available only supports Intel x86 processors, although it can be re-implemented for PowerPC architectures.

Problems With Mocks

There is no doubt that mocks are a very useful tool. They allow us to test object interactions in our unit tests without involving lots of different classes. In particular, mock frameworks make using mocks even simpler, saving typing and reducing the time we have to spend writing tests. What’s not to like about them?

The first problem with mocks is that they can add extra unnecessary complexity to the code, just for the sake of testing. In particular, I’m referring to the need for a virtual interface that the objects to be mocked inherit from. This is a requirement if you’re writing mocks by hand or using GoogleMock (not so much with MockItNow), and the result is more complicated code: You need to instantiate the correct type, but then you pass around references to the interface type in your code. It’s just ugly and I really resent that using mocks is the only reason those interfaces are there. Obviously, if you need the interface and you’re adding a mock to it afterwards, then there’s no extra complexity added.

If the complexity and ugliness argument doesn’t sway you, try this one: Every unnecessary interface is going to result in an extra indirection through a vtable with the corresponding performance hit. Do you really want to fill up your game code with interfaces just for the sake of testing? Probably not.

But in my mind, there’s another, bigger disadvantage to using mock frameworks. One of the main benefits of unit tests is that they encourage a modular design, with small, independent objects that can be easily used individually. In other words, unit tests tend to push design away from object interactions and more towards returning values directly or modifying external data.

A mocking framework can make creating mocks so easy that nothing discourages programmers from creating a mock object any time they think of one. And when you have a good mocking framework, every object looks like a good mock candidate. At that point, your code design is going to start looking more like a tangled web of objects communicating in complex ways, rather than simple functions without any dependencies. You might have saved some typing time, but at what price!
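As an illustration of the kind of design that unit tests push towards, here is a sketch in which the decision about which meshes an entity needs is returned as plain data instead of being expressed as calls into a mesh factory. EntityTemplate, MeshRequest, and PlanMeshes() are hypothetical names, and this is just one possible way to restructure such code.

```cpp
#include <cassert>
#include <string>
#include <vector>

// Hypothetical description of an entity, as read from the dictionary.
struct EntityTemplate
{
    std::vector<std::string> meshNames;
    bool castsShadows;
};

// Hypothetical description of one mesh that needs to be created.
struct MeshRequest
{
    std::string meshName;
    bool withShadowVolume;
};

// Decides which meshes an entity needs and returns that decision as data.
// It has no dependencies, so testing it requires no mock at all.
std::vector<MeshRequest> PlanMeshes(const EntityTemplate& entityTemplate)
{
    std::vector<MeshRequest> requests;
    for (const std::string& name : entityTemplate.meshNames)
        requests.push_back(MeshRequest{name, entityTemplate.castsShadows});
    return requests;
}

// Example test, written as a plain function so it fits any test framework.
bool PlanMeshesRequestsOneMeshPerName()
{
    EntityTemplate soldier;
    soldier.meshNames.push_back("body");
    soldier.meshNames.push_back("helmet");
    soldier.castsShadows = true;

    const std::vector<MeshRequest> requests = PlanMeshes(soldier);
    return requests.size() == 2
        && requests[0].meshName == "body"
        && requests[1].meshName == "helmet"
        && requests[1].withShadowVolume;
}
```

The code that actually creates meshes from the requests still has to exist somewhere, but it becomes a thin loop, and the logic worth testing sits in a function that only returns values.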

When to Use Mock Frameworks

That doesn’t mean that you shouldn’t use a mocking framework though. A good mocking framework can be a lifesaving tool. Just be very, very careful how you use it.

Using a mocking framework is most justified when dealing with existing code that was not written with unit testing in mind: code that is tangled together and impossible to use in isolation. Sometimes that’s third-party libraries, and sometimes it’s even (yes, we can admit it) code that we wrote in the past, maybe under a tight deadline, or maybe before we cared much about unit tests. In any case, trying to write unit tests that interface with code not intended to be tested can be extremely challenging. So much so that a lot of people give up on unit tests completely because they don’t see a way of writing them without a lot of extra effort. A mocking framework can really help in that situation to isolate the new code you’re writing from the legacy code that was not intended for testing.

Another situation where a mocking framework is a big win is as training wheels to get started with unit tests in your codebase. There’s no need to wait until you start a brand new project with a brand new codebase (how often does that happen anyway?). Instead, you can start testing today and use a good mock framework to help isolate your new code from the existing code. Once you get the ball rolling and write new, testable code, you’ll probably find you don’t need it as much.

Apart from that, my recommendation is to keep your favorite mocking framework ready in your toolbox, but only take it out when you absolutely need it. Otherwise, it’s a bit like using a jackhammer to drive a picture nail into the wall. Just because you can do it doesn’t mean it’s a good idea.

Keep in mind that these recommendations are aimed at using mock objects in C and C++. If you’re using other languages, especially more dynamic or modern ones, using mock objects is even simpler and without many of the drawbacks. In a lot of other languages, such as Lua, C#, or Python, your code doesn’t have to be modified in any way to insert a mock object. In that case you’re not introducing any extra complexity or performance penalties by using mocks, and none of the earlier objections apply. The only drawback left in that case is the tendency to create complex designs that are heavily interconnected, instead of simple, standalone pieces of code. Use caution and your best judgement and you’ll make the best use of mocks.

This article was originally printed in the June 2009 issue of Game Developer.

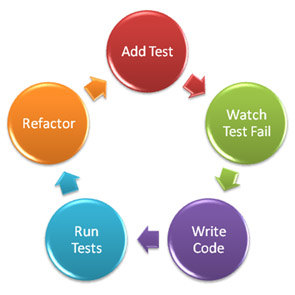
